Evita, AI creative director and founder of Studio Witzenhausen, on specificity as a core skill, the limits of photorealism, and why design fundamentals are non-negotiable.

Evita is an AI creative director and the founder of Studio Witzenhausen, a creative studio specialising in high-end AI-generated visuals for fashion and lifestyle brands. With a background in graphic design and photography, she brings a trained eye for light, composition, and storytelling to every project, directing AI tools the way she once directed a shoot. Where traditional production ends, her process begins. Alongside her studio work, she runs HAUS of AI, an education brand where she teaches creatives how to prompt, direct, and produce campaign-quality visuals using AI. She is based in Amsterdam.

Background & Craft

Was there a specific moment that shifted things for you?

It was gradual and then suddenly very fast. I had a background in graphic design and photography, so I already understood light, composition, and how images are made. When I first experimented with AI tools, my immediate frustration was that the results looked nothing like how a photographer actually thinks about a scene. The shift happened when I stopped using the tools like a search engine and started directing them the way I’d direct a photo shoot.

What did those six months of experimenting teach you that most people haven’t figured out yet?

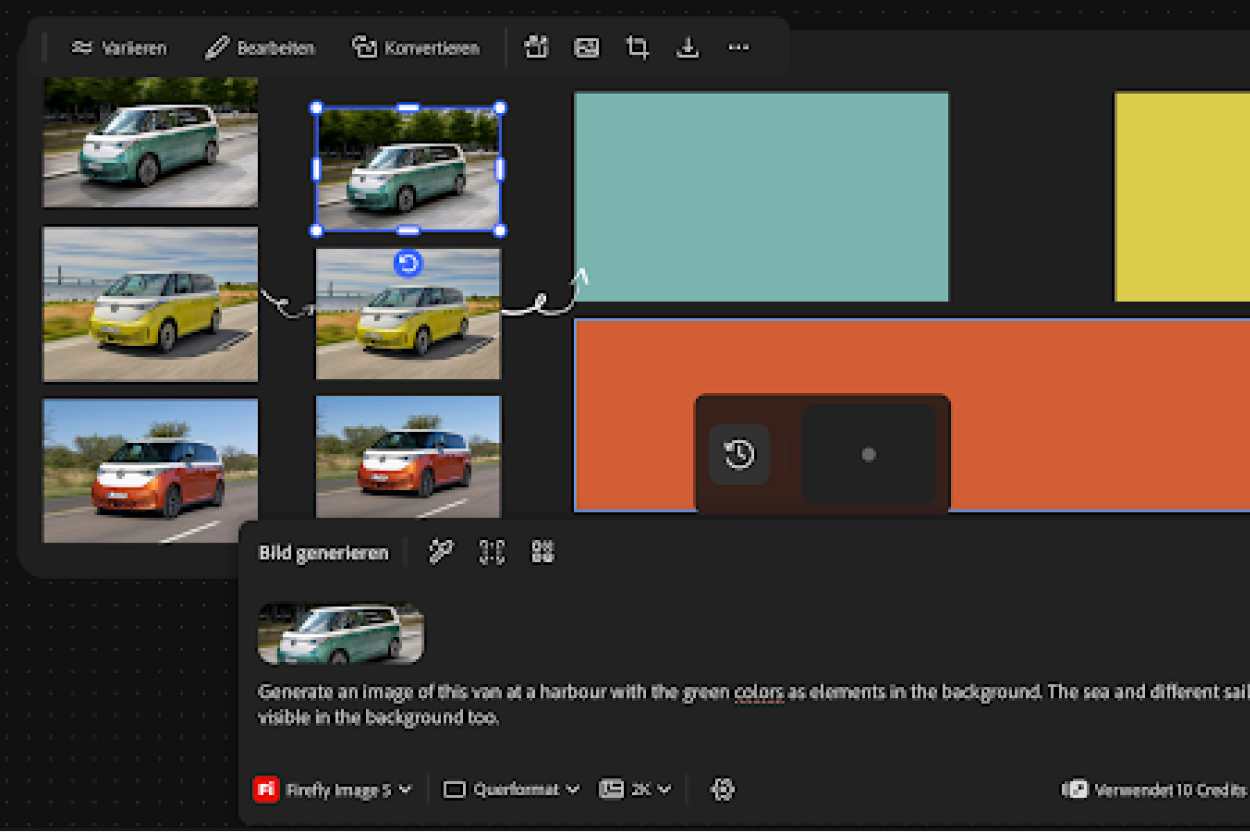

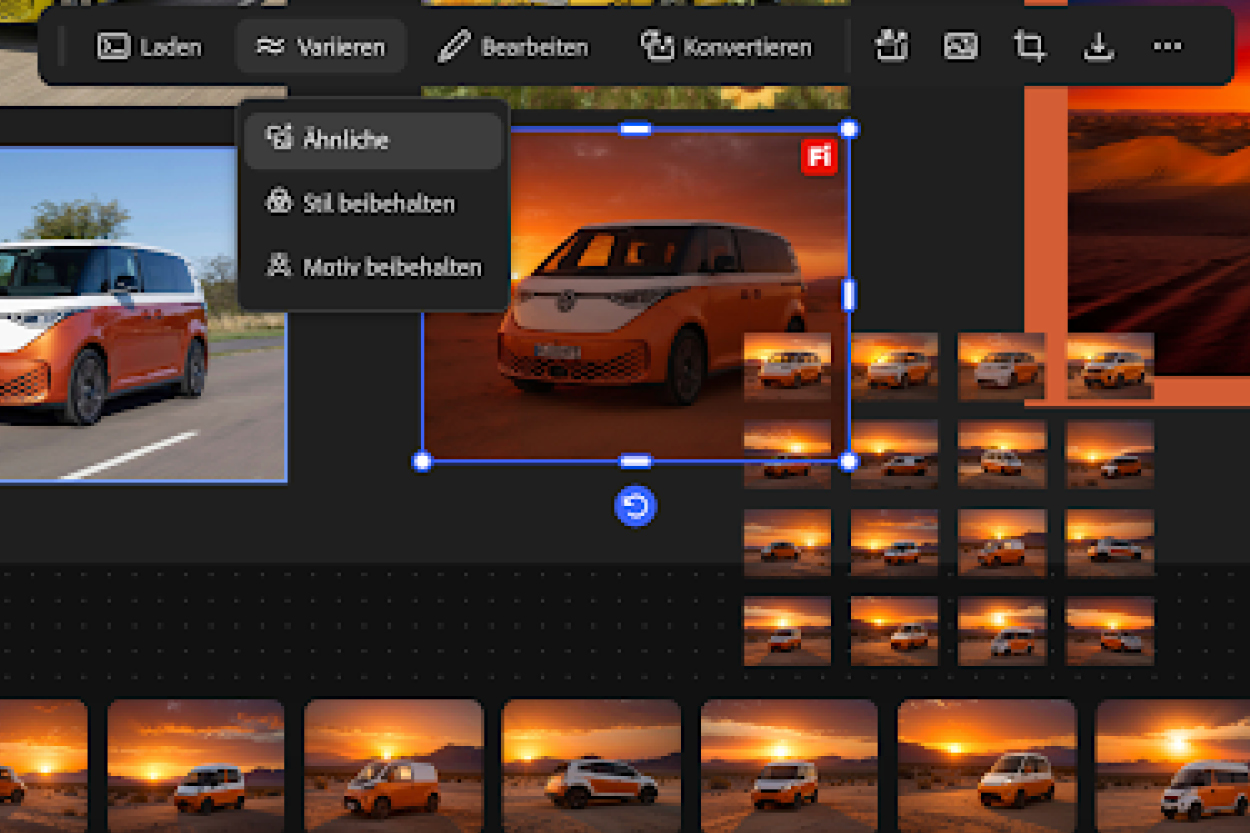

That specificity is everything. Most people write vague prompts and hope for the best. What I learned is that AI responds to the same language a photographer understands: light direction, lens choice, colour temperature, fabric texture and so on. The other big thing: reference images carry more information than words ever will. Once I built a structured workflow around that, the results became consistent and actually usable for client work.

Where’s the line between creative director and prompt writer?

A prompt writer translates an idea into text. A creative director starts with intention: what feeling should this image create, what story does it tell, who is it for, what should it not say. Coming from photography specifically, I know what a real image costs: the decisions made on set, the light you wait for, the moment you catch. AI doesn’t have that instinct. It generates. I direct. That editorial judgment (knowing when to stop, when to push, when the image is actually wrong even though it looks right) is the part that’s mine.

The Process

Walk me through what happens before you hit generate.

I start with the brief: what the brand needs, what emotion it should carry, what references exist. Then I build a visual direction: mood, colour palette, light quality, model type, setting. I think about what the image needs to do before I think about what it needs to look like. Only once I have that locked down do I start building prompts. By the time I hit generate, I already know what I’m looking for.

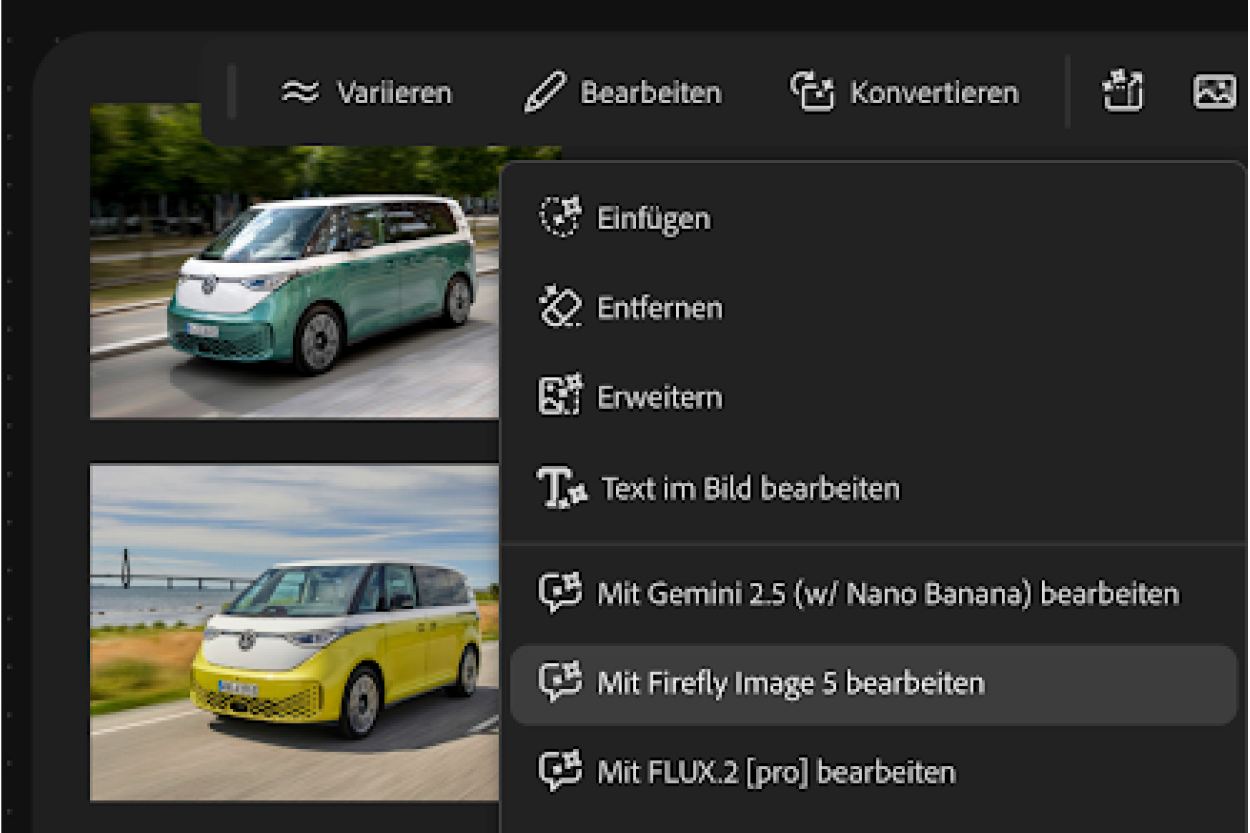

Favourite tool right now, and a prompt example?

Nano Banana 2 is my current favourite for fashion and model work. The realism it produces is genuinely impressive, and it handles fabric, skin, and light in a way other tools still struggle with. A prompt structure that consistently works for me follows this logic: subject → form → material → colour → detail → setting → lighting → camera specs → mood keywords.
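To make that ordering concrete, here is a minimal, illustrative sketch that assembles a prompt from named slots in exactly the sequence above. It assumes a plain comma-separated prompt string; the `PromptSpec` class, its field names and the sample values are hypothetical and are neither Evita's own tooling nor any image model's API.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class PromptSpec:
    """Hypothetical container for the order: subject -> ... -> mood keywords."""
    subject: str
    form: str = ""
    material: str = ""
    colour: str = ""
    detail: str = ""
    setting: str = ""
    lighting: str = ""
    camera_specs: str = ""
    mood_keywords: str = ""

    def build(self) -> str:
        # fields() returns dataclass fields in declaration order,
        # so the prompt always follows the same fixed sequence.
        parts = (getattr(self, f.name).strip() for f in fields(self))
        # Skip empty slots instead of emitting stray commas.
        return ", ".join(p for p in parts if p)


if __name__ == "__main__":
    # Illustrative sample values only.
    base = PromptSpec(
        subject="a woman in her thirties",
        form="oversized tailored blazer",
        material="wool with a visible weave",
        colour="muted camel",
        detail="natural skin texture",
        setting="concrete stairwell",
        lighting="soft window light from the left",
        camera_specs="85mm lens, shallow depth of field",
        mood_keywords="quiet, editorial",
    )
    print(base.build())
    # Change exactly one element while everything else stays locked.
    print(replace(base, lighting="late-afternoon side light").build())
```

Keeping each element in its own slot also makes single-element corrections straightforward: `dataclasses.replace` swaps one field and leaves the rest of the baseline untouched.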

How do you stay current without drowning in the noise?

When a new tool drops or a significant update comes out, I test it myself. If it improves my workflow, it stays. If it doesn’t, it goes. I’m not interested in collecting tools. I’m interested in what actually produces better results for clients. Most of what gets hyped on social media doesn’t survive contact with a real brief.

Photorealism & Bias

How do you navigate AI’s tendency to default to normative beauty standards?

AI defaults to perfect: perfect skin, perfect symmetry, perfect proportions. That’s actually the problem. Perfect doesn’t feel real, and real is what makes an image land. So I deliberately direct imperfection into every image: a natural skin texture, an asymmetric feature, a body that looks lived in. The same way a photographer doesn’t over-retouch, I don’t let the tool over-perfect. That’s where the human direction matters most. If you’re not making active choices, the tool is making them for you, and its default is always going to be the same narrow, polished ideal.

Have tools resisted your direction and kept defaulting anyway?

Yes, constantly in the early days, less so now as the tools have improved. When it happened, I’d approach it like a correction conversation: isolate the element that was wrong, be specific about what needed to change, and rebuild from that locked baseline. The mistake most people make is rewriting the whole prompt when one element is off. You fix one thing at a time. It’s the same logic as giving feedback on set.

Where’s the line between photorealism and deception? Do you label your work?

Yes, I label everything. Always. That’s non-negotiable for me, ethically and commercially. But I also think the deception conversation is worth reframing. When photography shifted from analogue to digital, nobody called a digital photo deceptive. AI is another shift in tooling, a different way to direct and create an image. The medium changed, the craft didn’t disappear.

That said, transparency matters. My clients know exactly what they’re getting, and I think audiences deserve to know too. So I label it, not because I’m ashamed of the medium, but because honesty about how an image is made is just part of doing this with integrity.

The Industry & Bigger Picture

Is AI production actually compatible with purpose-led brand ethics?

Honestly, it’s a tension I sit with, and I don’t think there’s a clean answer. Purpose-led brands care about how things are made: who’s in the room, where the budget goes, what the production footprint looks like. Those are real questions.

And yes, AI has a footprint too. Running these models consumes energy and water. I’m not going to pretend otherwise or use sustainability as a one-sided argument for AI production.

What I will say is that flying a crew to a location, booking models, shipping product and running a full production also has a significant footprint. Neither is innocent. The honest conversation is about trade-offs, not about one being categorically better than the other.

What AI does offer smaller brands is access to campaign-quality visuals they simply couldn’t afford to produce otherwise. That’s a different kind of value. And whether you’re working with AI or a traditional crew, the same questions apply: who is represented, what does this imagery say, are we making conscious choices? That’s not something the tool figures out. That’s creative direction.

What would you tell a young graphic designer today: dive into AI early, or master the craft first?

Master the craft first, definitely. My graphic design and photography background is the reason I can do this work well. Understanding light before you prompt about light. Understanding what makes a composition feel alive before you ask AI to build one. The foundation isn’t optional; it’s what separates someone who directs AI from someone who just generates with it. AI is a multiplier. It amplifies whatever visual intelligence you already have. Learn to see first. Then learn to direct.
